What are Weights and Biases in Neural Networks

Published: 1 Mar 2026

Weights and biases are the two core types of parameters that control how a neural network processes data and makes predictions. Together, they determine the strength of connections between neurons and the flexibility of activation thresholds, making them the foundation of how AI models learn from training data.

Neural networks use weights and biases to identify patterns in data across 4 primary application areas: image recognition, natural language processing (NLP), autonomous vehicles, and healthcare diagnostics. During training, weights and biases are adjusted repeatedly through forward propagation and backpropagation to reduce prediction errors and increase accuracy.

Understanding how AI weights and biases work helps explain how neural networks learn, why AI bias occurs, and how algorithmic bias can be detected and mitigated. Note that a bias parameter inside a neuron is a different concept from AI bias in the fairness sense, although both are discussed here. This article covers what weights and biases are, why they matter, how neural networks learn using them, and the real-world applications and challenges they present.

What are Weights and Biases?

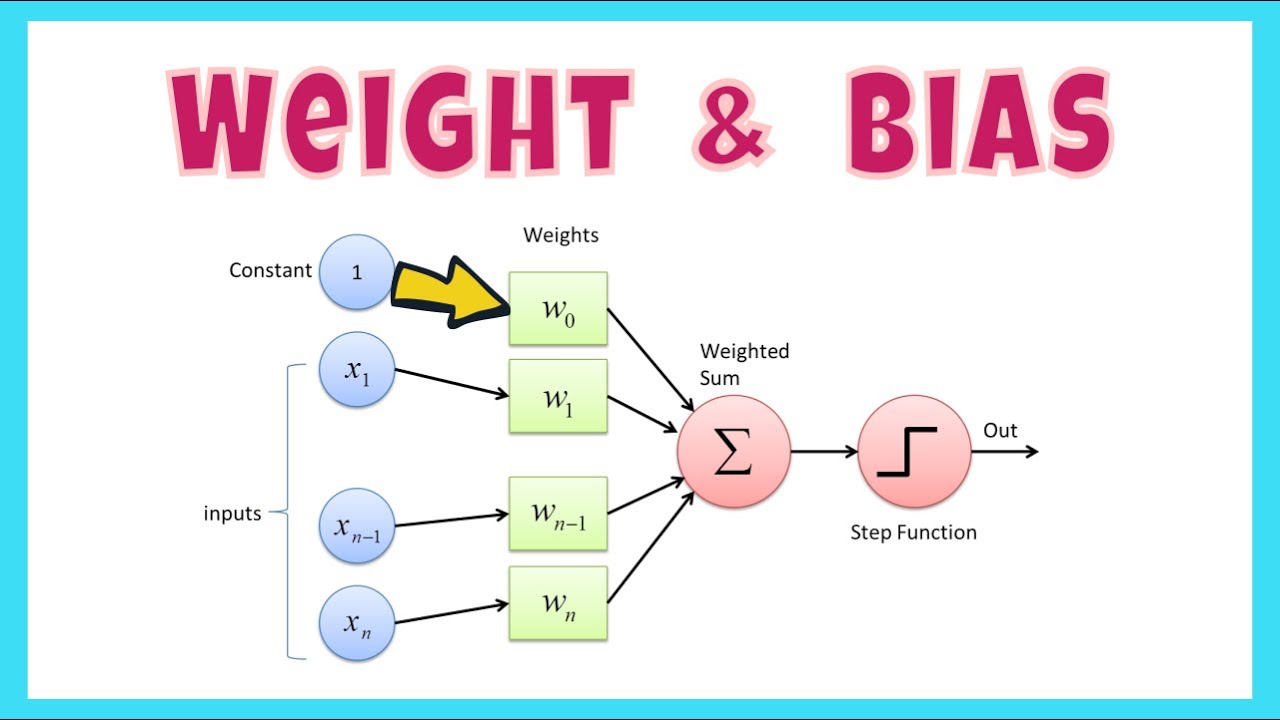

Weights and biases are learnable parameters in a neural network that control how input data is processed and transformed into an output. Weights determine the importance of each input signal, while biases provide a constant adjustment to the neuron’s output independent of any input.

Weights

Weights are numerical values assigned to the connections between neurons. Each weight controls how much influence a specific input has on the neuron’s output.

There are 3 primary roles weights play in a neural network:

- Signal strength: Weights scale each input signal. A larger weight gives more influence to that input; a smaller weight reduces its impact on the output.

- Learning mechanism: During training, weights are updated iteratively through optimization algorithms like gradient descent to minimize the gap between predicted and actual outcomes.

- Generalization: Well-tuned weights allow the network to make accurate predictions on new, unseen data, not just training data.

Example: In a neural network predicting house prices, the weight assigned to “house size” controls how much influence square footage has on the price prediction. A high weight means house size strongly drives the output. A low weight means other features carry more importance.

Biases

Biases are additional parameters that adjust a neuron’s output independently of its inputs. Unlike weights, a bias is not tied to any specific input—it shifts the activation function to better fit the data.

There are 3 key functions that biases serve:

- Activation flexibility: Biases allow neurons to activate even when the weighted sum of inputs is zero or very small. This lets the network recognize patterns that don’t pass through the origin.

- Range extension: Without a bias, a neuron’s activation threshold is fixed at a weighted sum of zero. A bias shifts that threshold up or down, so the neuron can activate across a wider range of input conditions.

- Training refinement: Biases update alongside weights during backpropagation, fine-tuning the model and improving prediction accuracy.

Example: In a house price prediction network, the bias ensures that a house with zero square footage still receives a non-zero predicted price. This non-zero baseline reflects fixed costs like land value and baseline construction expenses.
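The house-price examples above can be sketched as a single linear neuron: a weight multiplied by the input, plus a bias. The numbers below (150 per square foot and a 50,000 baseline) are made-up illustrative values, not trained parameters or real prices.

```python
# Illustrative values only: these numbers are assumptions, not trained parameters.
WEIGHT_PER_SQFT = 150.0   # how strongly house size influences the price
BIAS = 50_000.0           # baseline price that applies regardless of size


def predict_price(square_feet, weight=WEIGHT_PER_SQFT, bias=BIAS):
    """Single linear neuron: weighted input plus bias."""
    return weight * square_feet + bias


if __name__ == "__main__":
    print(predict_price(0))      # only the bias contributes
    print(predict_price(2000))   # weight * size + bias
```

Setting the weight to zero makes the prediction ignore house size entirely, while changing the bias moves every prediction up or down by the same amount.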

Why are Weights and Biases Important?

Weights and biases are the core mechanisms by which a neural network learns. Without them, a network cannot adjust its outputs based on data, generalize to new inputs, or reduce prediction errors over time.

There are 3 reasons weights and biases are fundamental to neural network function:

- They control the signal strength. Weights set the influence each input data point has on the final output. Biases give the network an independent value to shift outputs, ensuring the model can activate even when inputs are minimal.

- They enable learning. Weights and biases are the parameters that change during training, while settings such as the learning rate are chosen beforehand. Adjusting them through backpropagation is how the network gets better at predictions over time.

- They determine generalization. Properly tuned weights and biases allow a neural network to apply learned patterns to data it has never seen before, which is the primary goal of training.

How Do Neural Networks Learn?

Neural networks learn through a two-phase cycle: forward propagation and backpropagation. Each training cycle runs forward to generate a prediction, then backward to measure and reduce the error.

Forward Propagation

Forward propagation is the process of passing input data through each layer of a neural network to produce a prediction. There are 5 steps in this process:

- Input layer: Data enters through the input layer. This includes pixel values in an image, word tokens in a sentence, or numerical features in a dataset.

- Weighted sum: Each neuron multiplies every input by its corresponding weight and sums the results. This weighted sum measures the total influence of all inputs on that neuron.

- Adding biases: A bias value is added to the weighted sum. This shift allows the neuron to activate even when inputs are close to zero.

- Activation function: The weighted sum plus bias passes through an activation function—such as ReLU (Rectified Linear Unit) or sigmoid—which decides whether the neuron activates and passes data to the next layer.

- Layer propagation: The output of each layer becomes the input for the next, continuing until the final output or prediction is generated.
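The five steps above can be sketched in plain Python for a tiny network. The layer sizes and every parameter value in `forward` are hypothetical, hand-picked numbers chosen so the arithmetic is easy to follow; a trained network would have learned values instead.

```python
import math


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense_layer(inputs, weights, biases, activation):
    """Compute activation(weighted sum + bias) for every neuron in a layer.

    weights[j] holds the incoming weights of neuron j; biases[j] is its bias.
    """
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        weighted_sum = sum(w * x for w, x in zip(neuron_weights, inputs))
        outputs.append(activation(weighted_sum + bias))
    return outputs


def forward(inputs):
    # Hypothetical parameters for a 2-input, 2-hidden-neuron, 1-output network.
    hidden_weights = [[0.5, -1.0], [1.0, 1.0]]
    hidden_biases = [0.0, -0.5]
    output_weights = [[1.0, -2.0]]
    output_biases = [0.5]

    # The output of the hidden layer becomes the input of the output layer.
    hidden = dense_layer(inputs, hidden_weights, hidden_biases, relu)
    return dense_layer(hidden, output_weights, output_biases, sigmoid)


if __name__ == "__main__":
    print(forward([1.0, 2.0]))
```

For the input `[1.0, 2.0]`, the first hidden neuron has a weighted sum of -1.5, so ReLU outputs zero and that neuron passes nothing forward, while the second outputs 2.5.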

Backpropagation

Backpropagation is the process of working out how much each weight and bias contributed to the error after a prediction has been made, then adjusting them accordingly. There are 4 steps in this process:

- Error calculation: The network compares its predicted output to the actual target value. The difference is the error, also called the loss.

- Gradient calculation: The error propagates backward through the network. For each weight and bias, the network calculates the gradient, which gives the direction and rate at which the loss increases as that parameter changes. Moving a parameter in the opposite direction reduces the loss.

- Updating weights and biases: Using the gradients, an optimization algorithm like gradient descent updates the weights and biases to reduce the error in future predictions.

- Iteration: The forward and backward propagation cycle repeats across many batches of training data. With each iteration, the weights and biases move closer to their optimal values.
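As a minimal sketch of this cycle, the function below trains a single linear neuron with batch gradient descent. It assumes mean squared error as the loss, and the learning rate and epoch count are arbitrary illustrative choices. With only one neuron, the backward pass reduces to two derivative formulas; deeper networks apply the chain rule layer by layer to get the same kind of gradients.

```python
def train_linear_neuron(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b with batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Forward pass: predictions with the current parameters.
        preds = [w * x + b for x in xs]
        # Error calculation: prediction minus target for each example.
        errors = [p - y for p, y in zip(preds, ys)]
        # Gradient calculation: derivatives of the mean squared error.
        grad_w = (2.0 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2.0 / n) * sum(errors)
        # Update: step against the gradient to reduce the loss.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


if __name__ == "__main__":
    # Toy data generated from y = 2x + 1.
    w, b = train_linear_neuron([0, 1, 2, 3, 4], [1, 3, 5, 7, 9])
    print(w, b)
```

On this toy data the learned weight and bias approach 2 and 1, the values used to generate the targets.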

Terms Related to Weights and Biases

There are 5 key terms closely related to weights and biases in neural networks:

- Neuron: The basic processing unit of a neural network. Each neuron receives weighted inputs, adds a bias, and passes the result through an activation function.

- Layers: A collection of neurons. The input layer receives raw data, hidden layers process it, and the output layer delivers the final prediction. Weights govern the connections between layers.

- Hidden layers: Layers between the input and output layers where neurons apply weighted inputs and biases to transform data.

- Parameters: The collective term for all weights and biases in a neural network.

- Regularization: A technique that prevents weights from growing too large. Large, unmanageable weights cause overfitting—where the model performs well on training data but fails on new data. Regularization keeps weights in a stable, optimal range.
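To make the term "parameters" concrete, the sketch below counts the weights and biases in a fully connected network. It assumes every neuron connects to every neuron in the previous layer and carries exactly one bias; `count_parameters` is a hypothetical helper, not a library function.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the number of neurons per layer, input layer first.
    The input layer has no weights or biases of its own.
    """
    weights = sum(n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))
    biases = sum(layer_sizes[1:])
    return weights, biases


if __name__ == "__main__":
    # 3 inputs, one hidden layer of 4 neurons, 2 outputs.
    print(count_parameters([3, 4, 2]))
```

For a 3-4-2 network this gives 3 × 4 + 4 × 2 = 20 weights and 4 + 2 = 6 biases, so 26 parameters in total.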

Activation and Loss Functions

2 core functions work directly with weights and biases during training:

- Activation function: Applied to the weighted sum plus bias at each neuron. Activation functions like ReLU and sigmoid decide whether a neuron’s signal moves forward to the next layer. ReLU outputs zero for negative values and passes positive values unchanged. Sigmoid maps values to a range between 0 and 1, useful for binary classification.

- Loss function: Measures the difference between the network’s predicted output and the correct target value. The loss guides backpropagation: its gradients determine how each weight is adjusted, and a larger loss generally leads to larger adjustments.
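The two activation functions described above, plus one common loss function, can be written in a few lines. Mean squared error is used here as an assumed example of a loss; the article does not prescribe a specific one.

```python
import math


def relu(z):
    """Outputs zero for negative values and passes positive values unchanged."""
    return max(0.0, z)


def sigmoid(z):
    """Maps a real value into the range between 0 and 1."""
    # Two branches avoid overflow in math.exp for large negative z.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def mean_squared_error(predictions, targets):
    """Average squared difference between predictions and targets."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


if __name__ == "__main__":
    print(relu(-3.0), relu(2.5))
    print(sigmoid(0.0))
    print(mean_squared_error([1.0, 2.0], [1.0, 4.0]))
```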

Examples of Weights and Biases

Two clear examples demonstrate how weights and biases work in practice.

Letter recognition: To train a model to identify the letter “C,” the network must detect 3 shapes: a top curve, a left-leaning line, and a bottom curve. Weights control how important each detected shape is. If the weight on the top curve is high, detecting that curve strongly pushes the network toward predicting “C.” Biases ensure the neuron can still activate even if one shape is partially obscured.

House price prediction: In a neural network predicting house prices, a weight on “number of bedrooms” determines how strongly bedroom count drives the predicted price. A weight near zero means bedrooms barely affect the output. A high weight means bedroom count is a primary pricing factor. The bias adds a baseline price—such as the land value—to every prediction, regardless of the number of bedrooms.

Advantages of Weights and Biases

There are 5 key advantages weights and biases provide to neural networks:

- Data-driven learning: Weights and biases adjust automatically based on training data, enabling the network to learn patterns without manual programming.

- Output flexibility: Biases allow neurons to activate under a wide range of input conditions, making the network more adaptable to complex and irregular data.

- Improved accuracy over time: Iterative updates to weights and biases through backpropagation reduce prediction errors, increasing model accuracy with each training cycle.

- Better generalization: Properly tuned weights and biases help the network perform accurately on new, unseen data—not just the dataset used for training.

- Complex pattern recognition: Weights and biases allow neural networks to capture intricate patterns in data—patterns that traditional rule-based algorithms cannot handle.

Challenges of Weights and Biases

There are 5 primary challenges associated with weights and biases in neural networks:

- Weight initialization: Poor weight initialization leads to slow training or suboptimal model performance. Techniques like Xavier initialization and He initialization are used to set starting weights in a stable range.

- Overfitting risk: Over-adjusting weights during training can cause the model to memorize training data instead of learning generalizable patterns. Regularization techniques like dropout and L2 regularization reduce this risk.

- Complex tuning: Finding the right weights and biases requires significant computational resources and many training iterations. Selecting the correct learning rate for gradient descent adds further complexity.

- Algorithmic bias: AI bias occurs when training data reflects historical inequalities or sampling imbalances. The network learns those patterns through its weights, leading to outcome disparities and unfair predictions. This is commonly referred to as data bias.

- Black box nature: Interpreting how individual weights and biases produce a specific output is difficult. This lack of transparency is a concern in high-stakes fields like healthcare and law, driving demand for Explainable AI (XAI) and interpretable AI models.
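The two initialization schemes named in the list above can be sketched with the standard library. This uses the commonly cited formulas: a uniform range with limit sqrt(6 / (fan_in + fan_out)) for Xavier, and a normal distribution with standard deviation sqrt(2 / fan_in) for He. The function names and the list-of-lists layout are illustrative assumptions; deep learning frameworks provide their own versions.

```python
import math
import random


def xavier_uniform(fan_in, fan_out, rng=random):
    """Xavier (Glorot) uniform: sample from U(-limit, limit)."""
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_in)] for _ in range(fan_out)]


def he_normal(fan_in, fan_out, rng=random):
    """He normal: sample from N(0, std^2); often paired with ReLU."""
    std = math.sqrt(2.0 / fan_in)
    return [[rng.gauss(0.0, std) for _ in range(fan_in)] for _ in range(fan_out)]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are repeatable
    print(xavier_uniform(4, 2, rng))
    print(he_normal(4, 2, rng))
```

Both schemes scale the starting weights by the layer size, so that signals neither shrink toward zero nor blow up as they pass through many layers.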

Real-World Applications of Neural Networks

Neural networks apply weights and biases across 4 major real-world domains.

Image Recognition

Neural networks perform image classification tasks—such as identifying cats, dogs, and facial features—by learning which visual patterns matter most.

- Weights: Weights assign importance to pixel values in the input layer. In a cat image, the network gives higher weights to features like pointed ears, whiskers, and eye shape. These weighted features guide accurate object classification.

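- Biases: Biases shift the point at which each feature detector activates, so a neuron can respond at an appropriate level of evidence. Robustness to variations in lighting, image orientation, and position comes from the learned weights and biases working together, along with varied training data, rather than from biases alone.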

Natural Language Processing (NLP)

In NLP tasks, including sentiment analysis, language translation, and chatbots, neural networks analyze text by weighting the importance of individual words and phrases.

- Weights: Weights determine how much influence specific words carry in a given context. The word “excellent” in a customer review receives a high positive weight during sentiment analysis, while “poor” receives a high negative weight.

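- Biases: Biases set a baseline for each neuron’s activation, for example a default lean toward neutral sentiment before any words are considered. Handling varied sentence structures and tones, where one meaning can be phrased in multiple ways, depends on the weights and biases learned together from diverse examples.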

Autonomous Vehicles

Self-driving cars process sensor data from cameras, radar, and lidar to make real-time driving decisions such as stopping at road signs and avoiding pedestrians.

- Weights: Weights prioritize the most relevant sensor inputs. When a pedestrian enters the frame, the learned weights cause that signal to contribute strongly to the output, triggering a braking response.

- Biases: Biases set the baseline activation levels behind the network’s decision thresholds. Together with weights trained on challenging conditions like fog and nighttime driving, they help the network keep safe decision thresholds when sensor clarity decreases.

Healthcare and Medical Diagnosis

Neural networks assist medical professionals by analyzing X-rays, MRIs, and CT scans to detect anomalies and diagnose conditions.

- Weights: Weights focus the network on diagnostically significant features—such as the irregular cell patterns that indicate a tumor. High-weight regions in a scan image receive more processing attention during classification.

- Biases: Biases set baseline activation levels that are tuned together with the weights during training. When the training data covers different imaging techniques and patient body types, the model is more likely to stay reliable despite variations in scan quality or patient anatomy.

Weights and Biases FAQs

What are weights and biases used for?

Weights and biases are used to train a neural network to accurately predict outputs from input data. Weights control the influence each input has on the output. Biases add a constant shift to ensure neurons activate under a wide range of conditions. Together, they adjust through backpropagation until the model’s predictions match the training targets as closely as possible.

What is a neural network?

A neural network is a computational model built to process data through multiple layers of interconnected neurons. The network has 3 main layer types: an input layer that receives raw data, hidden layers where neurons apply weighted inputs and biases to extract patterns, and an output layer that produces the final prediction. Neural networks are trained by adjusting weights and biases to minimize the difference between predicted and actual outcomes.

Can weights and biases be overused?

Yes, weights and biases can be over-adjusted during training. When a network trains too long on the same data, weights can grow very large, and the model memorizes the training dataset instead of learning general patterns, a problem called overfitting. Overfitting causes poor performance on new data. Regularization methods, such as L2 regularization and dropout, reduce overfitting risk; L2 regularization in particular keeps weights in a manageable range. Each neuron also has at most one bias, so the number of biases is bounded by the number of neurons.
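As a rough sketch of how L2 regularization keeps weights in check, the penalty below adds the sum of squared weights to the loss, which in turn adds a pull toward zero to every gradient descent step. The function names and the `strength` parameter are illustrative assumptions, and biases are left out of the penalty, as is common practice.

```python
def l2_penalty(weights, strength):
    """L2 penalty: strength times the sum of squared weights."""
    return strength * sum(w * w for w in weights)


def sgd_step_with_l2(weights, gradients, learning_rate, strength):
    """One gradient descent step on (loss + L2 penalty).

    The penalty contributes 2 * strength * w to each weight's gradient,
    which shrinks every weight toward zero.
    """
    return [
        w - learning_rate * (g + 2.0 * strength * w)
        for w, g in zip(weights, gradients)
    ]


if __name__ == "__main__":
    print(l2_penalty([3.0, -4.0], 0.1))
    # With zero loss gradient, the step only shrinks the weight.
    print(sgd_step_with_l2([1.0], [0.0], learning_rate=0.1, strength=0.5))
```

Large weights are penalized more than small ones, so the optimizer only keeps a weight large when doing so reduces the prediction error enough to justify the penalty.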

Conclusion

Weights and biases are the two kinds of learnable parameters that define how a neural network processes input data and produces predictions. Weights control signal importance; biases provide activation flexibility. Through forward propagation and backpropagation, these parameters update continuously during training to reduce the loss and improve accuracy.

The 4 major real-world domains where AI weights and biases drive outcomes are image recognition, natural language processing, autonomous vehicles, and healthcare diagnostics. Understanding weight initialization, algorithmic bias, and AI fairness metrics is key to building models that generalize well and produce equitable results.

The main challenges (overfitting, algorithmic bias, and the black-box nature of neural networks) are addressed through regularization, data bias auditing, and explainable AI (XAI) techniques. As AI systems become more prevalent, transparency in how weights and biases shape decisions becomes increasingly important for fairness in AI and responsible deployment.
